About this tutorial

Despite being lengthy, this tutorial is in fact easy and fast. I have split it into parts so that you can get down to business right away if you need to.

It is worth mentioning that this simple procedure tends to present itself as rocket science. It is not, so I advise you to dive in (experimenting on a separate computer first may be a good idea). Again, I assure you it is VERY STRAIGHTFORWARD; the length is only because I am elaborating to make it easy.

Disclaimer

This is an effort to put all the information I need about bcache in one place, for my reference and your benefit. Please beware: bcache should be run with a backup. You will have to come up with a backup strategy yourself, since RAID, for example, will render the cache redundant, and rsync on big files might make your CPU do a lot of work. In any case, I am not responsible and will not be held liable for any damage you may endure.

SSDs are the future

SSDs have come a long way in terms of price, and I have no doubt that one day they will replace hard drives. There is no advantage in a hard drive that an SSD can’t eventually match. You might argue for TBs written, maybe, but have you tried to check the reliability of a hard drive stressed to the level needed to achieve those TBs written?

What is bcache for

Spinning hard drives are fast beasts when it comes to sequential reads, but on random reads, where the head has to seek the data, they become very, very slow. You can be reading at 200MB/s and suddenly drop to 2MB/s. SSDs do not suffer nearly as much from random reads: slower than sequential, yes, but nothing close to the gap you see in spinning disks, where the speed difference can be 100 fold OR MORE. bcache puts an SSD in front of the spinning disk as a cache, so that those random reads can be served from the SSD.

History (Windows)

The earliest attempt that I can remember was Intel Robson (2005). Intel Robson, or Intel Turbo Memory, was a feature of Core 2 era machines, but I don’t think it made it to the Core i series. It was not very popular, and for a good reason: for the extra cost, OEMs could add more RAM. Not only was that better for marketing, it also made more sense, as Windows was already introducing memory caching for disks with Windows Vista.

Some time later, Microsoft came up with ReadyBoost (with Windows Vista). ReadyBoost relied on fast pen drives to cache data from the spinning disk. It was not a very popular feature at the time, for many reasons. The main drawback was that it had to be designed so that the drive could be pulled out without affecting data integrity, which restricted write caching (writethrough only, no writeback), and it was still doing what RAM already did perfectly. Not to mention that affordable pen drives were not that fast to begin with.

Caching today.

Caching still makes sense today; I would argue it makes more sense than ever. Spinning hard disk drives are still much cheaper than SSDs. A good 1 TB SSD from Samsung is around $340 for the EVO and $460 for the Pro (Jul 2017). Compare that to a spinning disk, with a price tag averaging $40, and you will see that the difference is still around 10 fold, even more if you go up in size. So what do we do?

The answer is to cache the disk. Now is a better time than ever to use caching, with super fast SSDs that employ wear leveling and sit on a more stable and persistent connection (SATA inside the computer).

SSD caching On Windows.

On Windows, the answer may be Intel Smart Response Technology (SRT). I have not tried it myself, but I have heard many good things about it. You get into your BIOS and set the disks to RAID mode, then use the Intel Rapid Storage Technology software to cache the spinning disk on the SSD. That simple.

I could almost swear Intel had a software solution for this that was a bit pricey, but I can’t seem to find it. I remember watching a video about it many years ago.

In any case, I am not very experienced with Windows, so I will just leave it here.

SSD disk caching on Linux

On Linux, there are many solutions. The one I will be showing you how to use right now is bcache, because it is fast, efficient, and works on block devices.

I am assuming you have installed Debian Stretch (9), logged in, and have networking et al running. Now let us get to installing bcache. Mind you, bcache has been part of the Linux kernel since Jessie or even before, so all you need is bcache-tools. In Jessie you had to compile those with a few lines; in Stretch there is a package for it.

** BCACHE **

To help avoid confusion: you can use your big hard disk as a bcache device before attaching an SSD. You can then attach an SSD to it whenever you want, to start getting the performance gain.

Installing bcache tools in Debian Jessie (8)

** IF YOU ARE INSTALLING ON JESSIE, BCACHE TOOLS WERE NOT PACKAGED FOR JESSIE**

apt-get install git make gcc pkg-config uuid openssl util-linux uuid-dev libblkid-dev

cd /usr/src

git clone https://github.com/g2p/bcache-tools.git

cd bcache-tools

make

make install

** END OF FOR JESSIE **

Installing bcache tools in Debian Stretch (9)

apt-get install bcache-tools

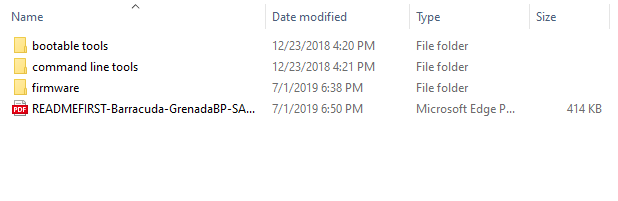
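Before going further, it may be worth confirming that the running kernel actually has bcache loaded. Below is a minimal sketch, assuming that the /sys/fs/bcache directory shows up once the bcache module is loaded; the function name bcache_ready and its optional directory argument are made up for this illustration.

```shell
# Sketch: check that the running kernel has bcache support loaded.
# bcache_ready and its optional directory argument are hypothetical helpers.
bcache_ready() {
    # /sys/fs/bcache is expected to appear once the bcache module is loaded
    [ -d "${1:-/sys/fs/bcache}" ]
}

# Usage (as root): load the module if needed, then check
#   modprobe bcache
#   bcache_ready && echo "bcache is available"
```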

Planning how to setup the drives

In this article, I will be setting up 2 separate disks that are not system disks: one is a 4TB spinning disk, the other is a 1TB SSD. There are a few rules that you need to be aware of, though.

1- You can cache as many backing devices as you wish with one SSD

2- You cannot cache one backing device with more than one SSD

3- There are memory requirements for bcache, so, for example, dropping the disks in a 486 computer with 256MB of RAM and using iSCSI is not a good idea.

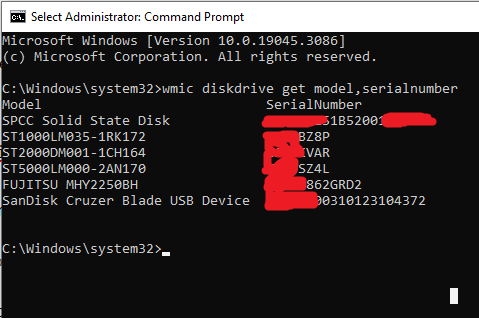

My setup

The backing device is your large spinning disk; the caching device is the SSD.

My backing device is a 4TB hard drive, connected as /dev/sde.

My caching device is a 1TB Samsung 850 EVO, connected as /dev/sdc. There are alignment considerations here since it is a TLC disk; the Pro is MLC and works like a regular disk with no alignment issues.
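If you are not sure which device name belongs to the spinning disk and which to the SSD, the kernel's "rotational" flag can help. The following is only a sketch: disk_kind is a made-up helper, the second argument exists only so it can be pointed at a different sysfs root, and disks behind some USB bridges or RAID controllers may report the flag incorrectly.

```shell
# Sketch: tell spinning disks from SSDs using the kernel's "rotational" flag.
# disk_kind is a hypothetical helper; the second argument is an optional
# sysfs root (defaults to /sys/block).
disk_kind() {
    flag_file="${2:-/sys/block}/$1/queue/rotational"
    if [ ! -r "$flag_file" ]; then
        echo "unknown"
        return 1
    fi
    # The kernel reports 1 for rotational media and 0 for non-rotational
    if [ "$(cat "$flag_file")" = "1" ]; then
        echo "hdd"
    else
        echo "ssd"
    fi
}

# Usage: disk_kind sde ; disk_kind sdc
```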

Setting up the backing device (sde), mounting and populating it with data

You may want to start with the following command to clear any existing filesystem signatures from the drive (replace sde with your own drive designation)

wipefs -a /dev/sde

Now, let’s set up sde as the backing device and sdc as the caching device

1- Run parted for backing device

parted /dev/sde

mklabel gpt

mkpart primary ext4 0% 100%

2- Make it a bcache backing partition

Using make-bcache, you will use the -B switch to tell the system that this is the backing device, meaning the spinning disk

make-bcache -B /dev/sde1

The output from the above will be something like

UUID: 19d92bc8-8f49-479a-9480-33ca659b91b2

Set UUID: 0e3f386a-ec62-42b9-b0f3-025a09253946

version: 1

block_size: 1

data_offset: 16

3- Format it as ext4 or whatever filesystem you fancy

mkfs.ext4 /dev/bcache0

4- Mount it like you would mount any other partition (create the mount point first if it does not exist)

mkdir -p /hds/bcache0

mount /dev/bcache0 /hds/bcache0

5- If you like, you can now copy your data to it and get things ready before attaching the SSD as cache.

I prefer to copy all the files to the spinning disk before attaching the SSD. A big copy is mostly sequential, which the SSD does not cache anyway, and whatever it does cache during the copy is not what we will use frequently. So I copy my files first, then I attach the SSD.

Setting up the caching device (sdc), then attaching it to the backing device

1- Create a partition on the caching device. You choose the size you want to use as cache, but if you want to use the whole disk, I would recommend leaving 10% unpartitioned for over-provisioning.

wipefs -a /dev/sdc

parted /dev/sdc

mklabel gpt

mkpart primary ext4 0% 90%
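Both parted sessions (the one for the backing disk and the one for the SSD) can also be scripted instead of typed interactively. Here is a rough sketch, assuming parted's -s (script) option; the helper make_gpt_partition and the RUN variable are made up for this example, and by default the function only prints the command, because running it destroys the partition table on the given disk.

```shell
# Sketch: print (or run) a scripted equivalent of the parted sessions.
# make_gpt_partition and the RUN variable are hypothetical; the default is a
# dry run that only prints the command.
make_gpt_partition() {
    dev="$1"
    end="${2:-100%}"
    # -s (script mode) stops parted from prompting for input
    cmd="parted -s $dev mklabel gpt mkpart primary ext4 0% $end"
    if [ "${RUN:-0}" = "1" ]; then
        $cmd
    else
        echo "$cmd"
    fi
}

# Usage:
#   make_gpt_partition /dev/sde          # backing disk, whole device
#   make_gpt_partition /dev/sdc 90%      # SSD, leaving 10% unpartitioned
#   RUN=1 make_gpt_partition /dev/sdc 90%   # actually run it (destructive)
```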

Using make-bcache, you will use the -C switch to tell the system that this is the caching device, meaning the solid state disk (SSD)

make-bcache -C /dev/sdc1

The output from the above will be something like

UUID: eeda3570-eb1b-4983-8c53-76322a654585

Set UUID: 92dbf6ca-0f0b-44d5-b70e-8f1e7919838d

version: 0

nbuckets: 1716964

block_size: 1

bucket_size: 1024

nr_in_set: 1

nr_this_dev: 0

first_bucket: 1
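The Set UUID in this output is the value needed later when attaching the cache, so it can be handy to save the output and pull the value out with a small filter. This is just a sketch: set_uuid_from_output is a made-up helper, and it assumes the output looks like the sample above (a line starting with "Set UUID:").

```shell
# Sketch: pull the "Set UUID" value out of saved make-bcache output.
# set_uuid_from_output is a hypothetical helper that reads text on stdin.
set_uuid_from_output() {
    # Match only lines that begin with "Set UUID:", not the plain "UUID:" line
    sed -n 's/^Set UUID:[[:space:]]*//p'
}

# Usage, assuming the output was saved when the cache was created:
#   make-bcache -C /dev/sdc1 | tee cache-info.txt
#   set_uuid_from_output < cache-info.txt
```

If the output was not saved, bcache-super-show /dev/sdc1 (from bcache-tools) should report the same value as cset.uuid.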

Now, even if it serves no technical purpose, just to give you a feel for this, try running the command below. It should print “no cache”, because we have not attached a cache to it yet.

cat /sys/block/bcache0/bcache/state

DO NOT format the caching partition as ext4

This time, we won’t be formatting it as ext4 like the backing device above. Think about it: the OS should see the backing device and, at some abstraction layer, not even know about this one, so why would it have a file system other than the one that bcache itself understands? We will simply be attaching it to the backing device.

Attaching the caching device

If you take a look at the output of the make-bcache -C command, you will notice a Set UUID. We need this unique ID to tell bcache which SSD to attach to which backing device. The only bcache device we have so far is bcache0, as you can see from above. Here is how we attach it.

echo 92dbf6ca-0f0b-44d5-b70e-8f1e7919838d > /sys/block/bcache0/bcache/attach

Now, if we run the state command again

cat /sys/block/bcache0/bcache/state

It should read “clean” or “dirty” instead of “no cache”: dirty if something has been written to the cache and has not yet reached the backing device, clean otherwise (I would bet it reads clean at this stage).
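The attach step and the two state checks around it can be wrapped into one small function. This is a sketch under assumptions: attach_cache is a made-up name, and the second argument simply defaults to the sysfs directory used in this tutorial.

```shell
# Sketch: attach a cache set to a bcache device and report the state.
# attach_cache is hypothetical; the second argument defaults to the sysfs
# directory used in this tutorial.
attach_cache() {
    set_uuid="$1"
    bdir="${2:-/sys/block/bcache0/bcache}"
    if [ ! -w "$bdir/attach" ]; then
        echo "cannot write to $bdir/attach (are you root?)" >&2
        return 1
    fi
    echo "state before: $(cat "$bdir/state")"
    # Writing the Set UUID to "attach" connects the cache set to this device
    echo "$set_uuid" > "$bdir/attach"
    echo "state after: $(cat "$bdir/state")"
}

# Usage (as root), with the Set UUID from your own make-bcache -C output:
#   attach_cache 92dbf6ca-0f0b-44d5-b70e-8f1e7919838d
```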

The setup is all done. If you want to fine tune it for your purpose, read on.

Tuning the cache.

1- Caching mode

To inspect which caching mode we are using now

cat /sys/block/bcache0/bcache/cache_mode

Which will probably result in

[writethrough] writeback writearound none

By default, the system uses writethrough (better data integrity). But if you are like me and have made 100% sure the power won’t ever go down, or if you back up the data in real time, you might want to switch to writeback. Writeback gives much faster write operations, which is not necessarily a requirement for all applications. Keep in mind that in writeback mode, data that has not yet reached the backing device exists only on the SSD.

echo writeback > /sys/block/bcache0/bcache/cache_mode
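The cache_mode file lists all modes and wraps the active one in square brackets, so a script that wants just the active mode has to pick it out. Here is a small sketch; current_cache_mode is a made-up helper, and its optional argument is the path of the file to read.

```shell
# Sketch: print only the active caching mode.
# current_cache_mode is a hypothetical helper; the optional argument is the
# path to a cache_mode file.
current_cache_mode() {
    # The active mode is the one wrapped in square brackets
    sed -n 's/.*\[\([a-z]*\)\].*/\1/p' "${1:-/sys/block/bcache0/bcache/cache_mode}"
}

# Usage: current_cache_mode
```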

2- Sequential read cutoff

The other thing you might wish to tune is the sequential read/write cutoff. Sequential I/O larger than the cutoff bypasses the cache, so we want a cutoff small enough that only data worth caching ends up on the SSD. By default it is 4MB, so that everything under 4MB sequential will be cached. I personally like to take that down to 1MB, judging by the fact that files larger than 1MB read pretty fast directly from the disk! But surely this will depend on your application and on experimentation with it.

Cache 1 megabyte and smaller

echo 1M > /sys/block/bcache0/bcache/sequential_cutoff

Cache everything (0 is a special value that disables the cutoff, not the usual “less than” logic)

echo 0 > /sys/block/bcache0/bcache/sequential_cutoff

Back to caching 4 megabytes and smaller (default)

echo 4M > /sys/block/bcache0/bcache/sequential_cutoff
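If one SSD caches several backing devices, you will have bcache0, bcache1 and so on, each with its own sequential_cutoff. The sketch below applies the same value to all of them; set_cutoff_all is a made-up helper and its second argument is only an optional sysfs root.

```shell
# Sketch: apply one sequential cutoff to every bcache device.
# set_cutoff_all is a hypothetical helper; the second argument is an optional
# sysfs root (defaults to /sys/block).
set_cutoff_all() {
    value="$1"
    root="${2:-/sys/block}"
    for f in "$root"/bcache*/bcache/sequential_cutoff; do
        # Skip the unexpanded pattern when no bcache device exists
        [ -e "$f" ] || continue
        echo "$value" > "$f"
        echo "$f <- $value"
    done
}

# Usage (as root): set_cutoff_all 1M
```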

3- Percentage of dirty data to allow on SSD.

I personally like it the way it is (10% of the SSD’s size), but you can change that, and sometimes you have to change it temporarily for certain purposes.

Flush all dirty data to disk as soon as you can

echo 0 > /sys/block/bcache0/bcache/writeback_percent

Allow 10% dirty data

echo 10 > /sys/block/bcache0/bcache/writeback_percent

The first (value 0) is very useful when you want to disconnect the cache. To disconnect, you want dirty_data on the SSD to be 0, so you can start by issuing the first line above; then, as soon as all the data is flushed to the backing device, you can disconnect the SSD like I will be showing you further down.

Manipulating the setup

Sometimes you want to replace your SSD with a larger, smaller, or newer one. Other times you want to disconnect it and use the backing device without a cache, or use the same caching device to cache more disks. Here I will show you how.

Assuming you want to disconnect the SSD, you will need to go through a couple of steps: first, make sure there is no dirty data, and second, detach it from the backing device.

For the first step, we should inform bcache that we don’t want any dirty data. By default, bcache allows 10% of the size of the SSD to be dirty data; we need to make that ZERO percent

echo 0 > /sys/block/bcache0/bcache/writeback_percent

Remember, if you reattach, you should set it back to ten percent in the same way

echo 10 > /sys/block/bcache0/bcache/writeback_percent
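The two steps (flush the dirty data, then detach) can be put together roughly as follows. This is a sketch, not a definitive procedure: flush_and_detach is a made-up helper, the polling interval is arbitrary, and it relies on the detach file that the kernel's bcache documentation describes (writing to it detaches the backing device from its cache set).

```shell
# Sketch: flush dirty data, wait until the cache is clean, then detach.
# flush_and_detach is hypothetical; arguments are the bcache sysfs directory
# and the polling interval in seconds.
flush_and_detach() {
    bdir="${1:-/sys/block/bcache0/bcache}"
    interval="${2:-5}"
    # Step 1: ask bcache to write back everything it holds
    echo 0 > "$bdir/writeback_percent"
    # Poll until the state no longer reads "dirty"
    while [ "$(cat "$bdir/state")" = "dirty" ]; do
        sleep "$interval"
    done
    # Step 2: writing 1 to "detach" disconnects the cache set from this device
    echo 1 > "$bdir/detach"
}

# Usage (as root): flush_and_detach
```

After detaching, the state file should go back to reading "no cache", and the backing device keeps working on its own.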

Monitoring cache and cache performance

1- How much dirty data is on the SSD. Assuming that /sys/block/bcache0/bcache/state reads dirty, you can see how much data is dirty with the command below.

cat /sys/block/bcache0/bcache/dirty_data

2- Caching statistics

tail /sys/block/bcache0/bcache/stats_total/*
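Among the files in stats_total, cache_hits and cache_misses are the two that tell you how well the cache is doing. The sketch below turns them into a single percentage; hit_ratio is a made-up helper and its optional argument is the statistics directory to read. The kernel also exposes a ready-made cache_hit_ratio file in the same directory, so this is mostly to show the arithmetic.

```shell
# Sketch: compute the cache hit percentage from the raw counters.
# hit_ratio is a hypothetical helper; the optional argument is a stats
# directory containing cache_hits and cache_misses.
hit_ratio() {
    sdir="${1:-/sys/block/bcache0/bcache/stats_total}"
    hits=$(cat "$sdir/cache_hits")
    misses=$(cat "$sdir/cache_misses")
    total=$((hits + misses))
    if [ "$total" -eq 0 ]; then
        echo "no requests yet"
        return 0
    fi
    # Integer percentage, rounded down
    echo "$((hits * 100 / total))%"
}

# Usage: hit_ratio
```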